WAL/DB Configuration in eEKAS (Ceph OSD Optimization)

Overview

In eEKAS, the WAL (Write-Ahead Log) and DB (RocksDB metadata) are critical components of Ceph OSDs (Object Storage Daemons) running the BlueStore storage backend. They optimize write performance and metadata handling within the storage cluster.

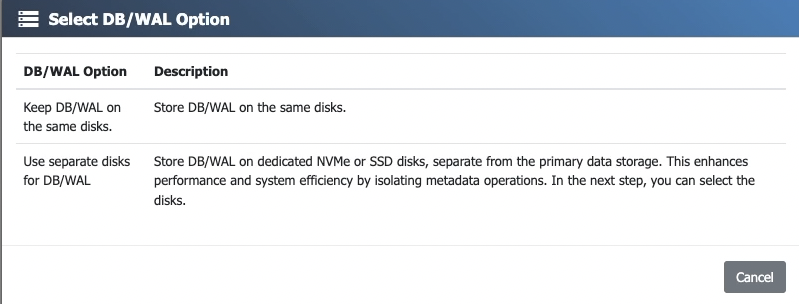

By default, these components reside on the same disk as the OSD data. However, eEKAS allows the use of dedicated WAL/DB devices, which can significantly improve performance and latency consistency, especially in hybrid storage configurations such as HDD-based OSDs accelerated by SSD or NVMe media.

Architecture and Function

WAL (Write-Ahead Log)

The WAL records write operations before they are fully committed. This protects consistency if the OSD is interrupted unexpectedly, and it can reduce write latency because log records are written sequentially to fast media and can be acknowledged before the data reaches the slower main device.

DB (RocksDB Metadata)

The DB stores metadata required by the OSD, including object indexes, placement group information, and other internal structures needed for fast lookup and efficient operation.

Operational Flow

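The following is a simplified view of the write path. For large writes, BlueStore typically writes the data directly to the main device and records only the metadata update through the WAL.

- A write request is received by the OSD.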

- The operation is first recorded in the WAL.

- Metadata is updated in the DB.

- The data is then committed to the main OSD storage.
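The simplified flow above can be traced with a small toy model. This is a minimal sketch for illustration only: the class `ToyOSD` and its fields are hypothetical, and the code does not reflect how Ceph's BlueStore or RocksDB actually implement the WAL. It only shows the order in which the WAL, the DB, and the data device are touched.

```python
from dataclasses import dataclass, field


@dataclass
class ToyOSD:
    # Hypothetical toy model, not Ceph/BlueStore code: it only records the
    # order in which a write touches the WAL, the DB, and the data device.
    wal: list = field(default_factory=list)     # pending log records
    db: dict = field(default_factory=dict)      # object name -> metadata
    data: dict = field(default_factory=dict)    # object name -> payload
    events: list = field(default_factory=list)  # trace of stages, in order

    def write(self, name: str, payload: bytes) -> None:
        # Step 1: the OSD has received the write request (this call).
        # Step 2: record the operation in the WAL first.
        record = (name, payload)
        self.wal.append(record)
        self.events.append("wal")
        # Step 3: update the object's metadata in the DB.
        self.db[name] = {"size": len(payload)}
        self.events.append("db")
        # Step 4: commit the payload to the main data device,
        # after which the WAL record is no longer needed.
        self.data[name] = payload
        self.wal.remove(record)
        self.events.append("data")


osd = ToyOSD()
osd.write("obj-1", b"hello")
print(osd.events)  # ['wal', 'db', 'data']
```

After the write completes, the WAL record has been retired, the DB holds the object's metadata, and the payload sits on the data device.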

Benefits of Dedicated WAL/DB Devices

Performance Improvements

- Reduced latency: Faster acknowledgment of write operations.

- Improved IOPS: Especially beneficial for small random write workloads.

- Optimized use of HDDs: Data disks handle bulk storage, while SSD/NVMe devices handle metadata and log activity.

- More consistent behavior under load: Particularly important in busy clusters and mixed workloads.

Cluster Efficiency

- Improved responsiveness during rebalance and recovery operations

- Better performance for metadata-heavy workloads

- Particularly useful for erasure-coded pools and object storage deployments

Configuration in eEKAS

Disk Selection Rules

When configuring WAL/DB devices in eEKAS, you must select either 2 or 4 disks per node.

- 2 disks: eEKAS creates RAID 1

- 4 disks: eEKAS creates RAID 10
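The disk selection rule can be expressed as a simple lookup. This is a sketch only; `wal_db_raid_level` is a hypothetical helper name and not part of any eEKAS interface. It rejects any disk count other than 2 or 4.

```python
def wal_db_raid_level(disk_count: int) -> str:
    # Hypothetical helper encoding the eEKAS rule described above:
    # 2 WAL/DB disks -> RAID 1, 4 WAL/DB disks -> RAID 10.
    levels = {2: "RAID 1", 4: "RAID 10"}
    if disk_count not in levels:
        raise ValueError(f"WAL/DB needs 2 or 4 disks per node, got {disk_count}")
    return levels[disk_count]


print(wal_db_raid_level(2))  # RAID 1
print(wal_db_raid_level(4))  # RAID 10
```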

Important: WAL/DB devices contain critical metadata and write-state information for the OSDs using them. If these devices fail without proper redundancy, every OSD using them is lost along with all data stored on it, and the cluster can restore that data only from replicas or erasure-coded shards on other OSDs. For this reason, WAL/DB must always be protected by RAID.
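To illustrate why RAID protection matters, the following sketch checks whether a WAL/DB volume remains readable after a given set of disk failures. It assumes the disks are numbered from 0 and that, in RAID 10, disks (0, 1) and (2, 3) form the mirror pairs; real controllers may pair disks differently. The function name is hypothetical.

```python
def wal_db_volume_survives(failed: set, disk_count: int) -> bool:
    # Assumption: disks are numbered 0..disk_count-1, and in RAID 10 the
    # mirror pairs are (0, 1) and (2, 3). Real controllers may pair differently.
    if disk_count == 2:
        mirror_pairs = [(0, 1)]           # RAID 1: one mirrored pair
    elif disk_count == 4:
        mirror_pairs = [(0, 1), (2, 3)]   # RAID 10: two mirrored pairs, striped
    else:
        raise ValueError("WAL/DB needs 2 or 4 disks per node")
    # The volume stays readable only while every pair keeps at least one disk.
    return all(not {a, b} <= failed for a, b in mirror_pairs)


print(wal_db_volume_survives({0}, 2))     # True: one mirror copy remains
print(wal_db_volume_survives({0, 2}, 4))  # True: each pair lost one disk
print(wal_db_volume_survives({0, 1}, 4))  # False: a whole pair is gone
```

A single unprotected WAL/DB disk has no second copy, so one failure is enough to take down every OSD that uses it.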

Sizing Guidelines

To simplify planning, use the following generous sizing guideline for dedicated WAL/DB capacity:

Minimum WAL/DB capacity per node = number of OSDs on the node × 50 GB

Recommendation: This is a generous minimum guideline intended to provide sufficient headroom for metadata growth, recovery activity, and metadata-heavy workloads. Larger sizing may be beneficial for object storage, erasure coding, or environments with many small files or objects.
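The sizing formula translates directly into a one-line calculation. In this sketch, the constant `GB_PER_OSD` and the function name are illustrative choices; the 50 GB per OSD value comes from the guideline above.

```python
GB_PER_OSD = 50  # per-OSD WAL/DB guideline from this section


def wal_db_capacity_gb(osd_count: int) -> int:
    # Minimum recommended WAL/DB capacity for one node, in GB.
    if osd_count < 0:
        raise ValueError("OSD count cannot be negative")
    return osd_count * GB_PER_OSD


for osds in (8, 12, 24):
    print(f"{osds} OSDs -> {wal_db_capacity_gb(osds)} GB")
```

The loop prints 400 GB, 600 GB, and 1200 GB, matching the sizing examples that follow.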

Sizing Examples

- 8 OSDs on one node → minimum 400 GB WAL/DB capacity

- 12 OSDs on one node → minimum 600 GB WAL/DB capacity

- 24 OSDs on one node → minimum 1200 GB WAL/DB capacity

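When selecting 2 or 4 WAL/DB disks, ensure that the resulting usable capacity is large enough to cover the calculated requirement for that node. RAID 1 over two disks provides the capacity of one disk, and RAID 10 over four disks provides the capacity of two disks.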
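A quick capacity check combines the RAID usable-capacity rule with the 50 GB per OSD guideline. This is a sketch under simplifying assumptions: all disks in the set are the same size, and controller, partitioning, and LVM overhead are ignored. The disk sizes in the example are hypothetical.

```python
def raid_usable_gb(disk_count: int, disk_size_gb: int) -> int:
    # RAID 1 (2 disks) keeps one disk's worth of capacity; RAID 10 (4 disks)
    # stripes two mirrored pairs, keeping two disks' worth. Controller,
    # partitioning, and LVM overhead are ignored in this sketch.
    if disk_count not in (2, 4):
        raise ValueError("WAL/DB needs 2 or 4 disks per node")
    return (disk_count // 2) * disk_size_gb


def plan_fits(osd_count: int, disk_count: int, disk_size_gb: int,
              gb_per_osd: int = 50) -> bool:
    # Compare usable RAID capacity with the 50 GB-per-OSD guideline.
    required_gb = osd_count * gb_per_osd
    return raid_usable_gb(disk_count, disk_size_gb) >= required_gb


# Hypothetical node with 24 OSDs (needs at least 1200 GB):
print(plan_fits(24, 2, 960))   # False: RAID 1 of 2 x 960 GB gives 960 GB
print(plan_fits(24, 4, 960))   # True: RAID 10 of 4 x 960 GB gives 1920 GB
```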

Risks and Considerations

Without Proper Protection

- Loss of WAL/DB means loss of critical OSD metadata

- Affected OSDs become unusable

- All data stored on those OSDs is lost; the cluster can restore it only from replicas or erasure-coded shards on other OSDs

- Cluster recovery requires OSD recreation and data rebalancing

Misconfiguration Risks

- Using slow disks for WAL/DB reduces or eliminates the intended performance benefit

- Undersized WAL/DB capacity can reduce performance and operational headroom

- Mixing device classes (for example, SATA SSD and NVMe) within or across WAL/DB sets can lead to inconsistent performance between OSDs and nodes
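The misconfiguration risks above can be turned into a simple pre-deployment check for one node. This is a minimal sketch, not an eEKAS feature: the `WalDbDisk` type, the media labels `"hdd"`, `"ssd"`, and `"nvme"`, and the model names in the example are all hypothetical, and it assumes mirrored capacity is limited by the smallest member disk.

```python
from dataclasses import dataclass


@dataclass
class WalDbDisk:
    model: str    # vendor model string (hypothetical values below)
    media: str    # "hdd", "ssd", or "nvme" (labels assumed for this sketch)
    size_gb: int


def check_node_plan(osd_count: int, disks: list, gb_per_osd: int = 50) -> list:
    # Return warnings for one node's WAL/DB plan; an empty list means no issue found.
    if len(disks) not in (2, 4):
        return [f"expected 2 or 4 WAL/DB disks, got {len(disks)}"]
    warnings = []
    # Slow disks remove most of the benefit of a dedicated WAL/DB device.
    if any(d.media == "hdd" for d in disks):
        warnings.append("slow media: HDD selected for WAL/DB")
    # Mixed classes or models in one set can make performance inconsistent.
    if len({(d.media, d.model) for d in disks}) > 1:
        warnings.append("mixed device classes or models in the WAL/DB set")
    # Assumption: mirrored capacity is limited by the smallest member disk.
    usable_gb = (len(disks) // 2) * min(d.size_gb for d in disks)
    required_gb = osd_count * gb_per_osd
    if usable_gb < required_gb:
        warnings.append(f"undersized: {usable_gb} GB usable, {required_gb} GB required")
    return warnings


good = [WalDbDisk("nvme-a", "nvme", 1920), WalDbDisk("nvme-a", "nvme", 1920)]
mixed = [WalDbDisk("ssd-b", "ssd", 480), WalDbDisk("nvme-a", "nvme", 960)]
print(check_node_plan(24, good))   # []
print(check_node_plan(12, mixed))  # two warnings: mixed set, undersized
```

Running the same check on every node also helps keep device selection consistent across the cluster.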

Best Practices

- Use enterprise-grade SSD or NVMe devices

- Prefer low-latency, high-endurance media

- Keep device selection consistent across nodes where possible

- Plan extra headroom for metadata-heavy workloads such as S3 object storage, erasure coding, and high object counts

Summary

Dedicated WAL/DB devices in eEKAS provide an important performance and reliability enhancement for Ceph-based storage clusters. By offloading metadata and write-log activity to fast media, they improve responsiveness and operational consistency.

Because these devices store critical OSD metadata, they must always be protected by RAID. As a practical and generous planning guideline, allocate at least 50 GB of WAL/DB capacity per OSD on each node.